Ewarenow Blog

Costly Errors and Practices for Preventing Them

Detect and correct errors early in your design and development process.

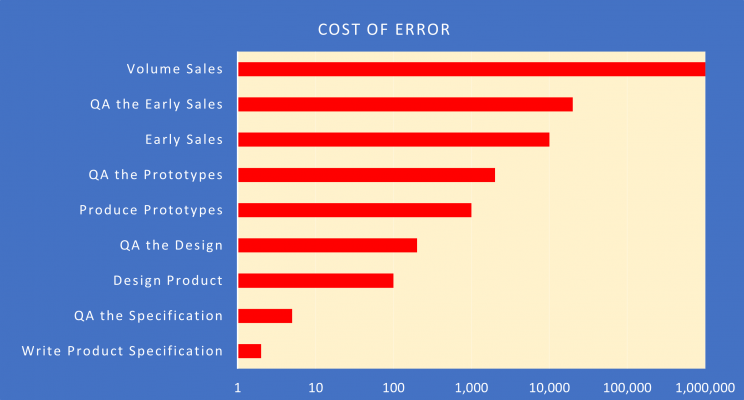

The later an error is detected, the higher the costs in money, resources, reputation, and even lives. Costs usually rise exponentially.

COSTLY ERRORS

Here are four real examples, in order of decreasing cost.

Boeing’s 737 MAX was grounded worldwide in March 2019 after two fatal crashes killed 346 passengers and crew. A major factor in both crashes was a software module called MCAS. Even before Covid-19 brought commercial aviation to a halt, the cost of MCAS to Boeing had reached USD 20 billion and was still climbing. My April 2019 LinkedIn article delved into the business culture that contributed to the debacle.

MCAS is a small software module intended only to switch on and automatically correct a supposedly rare flight condition. It was a tiny detail until it killed people. Apparently no one had even thought to ask, “What could cause MCAS to malfunction, and how can we prevent that?”

Samsung launched its Galaxy Note 7 smartphone in August 2016. One of its improvements over its Note 5 predecessor was a high-capacity lithium-ion battery. By September 2016, some of those batteries had caught fire. Samsung ultimately withdrew the phone and issued refunds to all buyers, at a total cost of over USD 5 billion. Samsung’s smartphone engineering team was apparently unaware that Sony and Apple had learned in 2006 that lithium-ion batteries like to catch fire, or that Boeing had rediscovered that fact in 2013 with its new 787 Dreamliner.

Understandably, the Samsung Note 7 engineering team and all the marketing folks were focused on exciting features and how to beat Apple. “The battery only supplies power. It doesn’t DO anything.” Apparently, no one asked, “Could anything cause the battery to catch fire and how can we prevent that?”

A PCB (printed circuit board) supplier received an order for 1000 boards from an EMS (electronics manufacturing services) company, along with the spec and Gerber files for a new electronics product. The PCB supplier reviewed the package and passed it on to its manufacturing department. When the boards came back, the supplier performed QA, found them acceptable, and delivered them to the EMS. The OEM (original equipment manufacturer) pressured the EMS to hurry, so no first-article process took place. All 1000 boards were populated. None worked. Then came the circular firing squad among the OEM, the EMS, and the PCB supplier. In the end, instead of receiving USD 3,000 for the boards, the PCB supplier paid USD 180,000 to the EMS to cover the cost of the populated boards.
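The figures in this example show how much the cost of a single undetected error grew at one stage. The per-board split assumes that the USD 180,000 covered all 1000 populated boards equally:

$$
\frac{\text{USD } 3{,}000}{1000 \text{ boards}} = \text{USD } 3 \text{ per bare board}, \qquad
\frac{\text{USD } 180{,}000}{1000 \text{ boards}} = \text{USD } 180 \text{ per populated board}, \qquad
\frac{180}{3} = 60
$$

One missed design flaw turned a USD 3 item into a USD 180 loss, a 60-fold increase.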

The electronics product failure was probably due to a flaw in the PCB design. The OEM’s engineers may have unconsciously regarded the PCB as just “a board to hold all the important things, and wires to connect them together.”

From then on, the PCB supplier made a practice of reviewing, and often correcting, risky PCB designs from OEMs before releasing the Gerber files to manufacturing.

Ewarenow developed a large, complex business app for a water testing laboratory to replace its obsolescent 20-year-old predecessor. We deployed the new app so that it could run in parallel with the old one. Key users submitted change requests that led to quick-response version upgrades. Some fixed bugs. Some made the app easier to use. Some, like the following one, prevented errors.

The customer’s QA Officer discovered that users logging in new water samples for testing sometimes did not enter a legitimate date/time of sample collection. That date/time is reported on the Certificate sent to the customer, and some test result calculations depend on it being correct. Each illegitimate entry can cause costly rework and customer dissatisfaction.

Diagnosis: The business app defaulted the sample collected date/time to ‘Now’ unless the user specifically overrode it. The business app was at fault. Cure: change the business app to require the user to enter the date/time and then verify that it is earlier than ‘Now’ and reasonable.
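The cure can be sketched in Python. This is a minimal illustration, not the app’s actual code. The function name `validate_collected_at` is hypothetical, and so is the 30-day `MAX_SAMPLE_AGE`, which stands in for “reasonable”; the real limit would be a business rule set by the lab. The sketch also assumes that `collected_at` and `now` follow the same timezone convention (both naive or both aware), because Python raises `TypeError` when ordering a naive datetime against an aware one.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical business rule: the oldest a sample may plausibly be when logged in.
MAX_SAMPLE_AGE = timedelta(days=30)


def validate_collected_at(
    collected_at: Optional[datetime],
    now: datetime,
    max_age: timedelta = MAX_SAMPLE_AGE,
) -> datetime:
    """Return collected_at if it passes every check, else raise ValueError.

    There is deliberately no default value: the user must enter one.
    """
    if collected_at is None:
        raise ValueError("Sample collected date/time is required.")
    if collected_at >= now:
        raise ValueError("Sample collected date/time must be earlier than now.")
    if now - collected_at > max_age:
        raise ValueError(
            f"Sample collected date/time is more than {max_age.days} days ago; "
            "please check it."
        )
    return collected_at
```

Passing `now` in explicitly, rather than calling `datetime.now()` inside the function, makes the check easy to unit test with fixed dates.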

ERROR PREVENTION PRACTICES

We design and develop new products and new versions of existing products; they happen to be software products. Your business is unique, but similar QA practices may apply to it or may trigger your own thinking.

Murphy’s Law: “Anything that can go wrong will go wrong.”

At each stage of your process, have Quality Assurance (QA) do more than confirm that things are done right.

Deliberately consider things going wrong and identify ways to preclude that.

Don’t slack off on the “small stuff”.

QA the Specification and QA the Design

Peer Reviews: A development team member has authored a spec or design for a new module: what it will do, how it will do it, and how it will fit into the overall product.

The author convenes an online meeting with other team members, puts the document on screen for all to see, and scrolls and talks through it. Other team members interrupt with clarifying questions. One occasional (and delightful) side effect is that the author stops to say, “Oops. That doesn’t make any sense. I’ll fix that.” After the walk-through, the other team members ask questions and offer suggestions and new ideas. Many of the questions are deliberately designed to challenge the spec or design constructively.

The result is multiple eyes, ears, and minds on the spec or design for the module, with a high likelihood that any shortcomings are found and corrected. And all participants now know quite a bit about that module.

Expert Reviews: The product development team may ask for or be given access to a subject-matter expert when needed. That expert will review the team’s work and provide guidance or instruction, as necessary.

Focused Training: When a team member or the team needs some special training for the project, they obtain it, usually online, as part of their job.

Online Research: This is ingrained in our software engineers. When I ask one of them a question they can’t answer, they immediately say, “I’ll research that and get back to you on Skype, Doug.” Next thing I know, I’ve received the answer, supported by one or more URLs.

QA the Product

Here are our key practices for QA on the product itself. Some variant of them may work for you.

Module and Integration QA

For each product, we have a series of “builds” that integrate all the modules into one master copy. An individual developer creates a new module or makes planned feature changes to an existing one, “unit tests” the module to ensure it works as specified, and submits it to the currently active build. A member of the QA staff then tests the module at two levels. First, does it operate within the product as specified? Second, can I make it malfunction, or does it detect every such trick, deal with it appropriately, and keep working?
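The two levels of testing can be illustrated with Python’s standard `unittest` module. The module under test, `parse_sample_volume_ml`, is a hypothetical example invented for this sketch, not part of any Ewarenow product. One test class checks the specified behavior; the other plays the QA tester trying to make it malfunction with empty, non-numeric, zero, negative, and `nan`/`inf` input.

```python
import math
import unittest
from typing import Optional


def parse_sample_volume_ml(text: Optional[str]) -> float:
    """Hypothetical module: convert a user-entered volume (mL) to a positive float."""
    if not isinstance(text, str) or not text.strip():
        raise ValueError("Volume is required.")
    try:
        value = float(text.strip())
    except ValueError:
        raise ValueError(f"Not a number: {text!r}") from None
    # float() happily accepts 'nan' and 'inf', so reject them explicitly.
    if not math.isfinite(value) or value <= 0:
        raise ValueError(f"Volume must be a positive number: {text!r}")
    return value


class SpecifiedBehavior(unittest.TestCase):
    """Level 1: does the module do what the spec says?"""

    def test_plain_number(self):
        self.assertEqual(parse_sample_volume_ml("250"), 250.0)

    def test_surrounding_whitespace(self):
        self.assertEqual(parse_sample_volume_ml(" 12.5 "), 12.5)


class TryToBreakIt(unittest.TestCase):
    """Level 2: can a tester make it malfunction?"""

    def test_bad_inputs_are_rejected(self):
        for bad in ["", "   ", "abc", "0", "-5", "nan", "inf", None]:
            with self.subTest(bad=bad):
                with self.assertRaises(ValueError):
                    parse_sample_volume_ml(bad)
```

Saved as a module, the tests run with `python -m unittest <module_name>`. The `subTest` context reports each bad input separately, so one failing case does not hide the others.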

Acceptance Testing

When we are ready to deliver a new product or new version to the customer, we conduct our own internal Acceptance Test. Each new or changed feature gets some testing with realistic, production-type data. Sometimes the Acceptance Test leads to a few minor software tweaks by the development team before final packaging and delivery.

Thanks for taking the time to read this.

If you got here from LinkedIn, I’d be grateful for your assessment there (Like, Comment, Share). Or you can respond by email via the Ewarenow Get In Touch page.